In this blog we look at the NoSQL database Azure Cosmos DB, covering its benefits and a specific use case. The NoSQL market has grown tremendously, and NoSQL databases are now commonly a key technology element for organisations moving into a more digital world.

NoSQL has advantages over classic relational databases and is a better fit for workloads such as IoT applications, online gaming and complex audit logs, where we can build flexible schemas that scale horizontally with ease. That is hard to achieve with something like SQL Server sharding. If the data is modelled correctly, sub-second query response times are possible even for globally distributed data sets. Azure Cosmos DB is Microsoft’s managed service offering in the NoSQL world and is a market leader.

AZURE COSMOS DB OVERVIEW

Azure Cosmos DB is a globally distributed, multi-region and multi-API NoSQL database that provides tunable consistency (from eventual through to strong), extremely low latency and high availability built into the product. If you decide to go for a multi-region read-write approach, you can get 99.999% read and write availability across the globe.

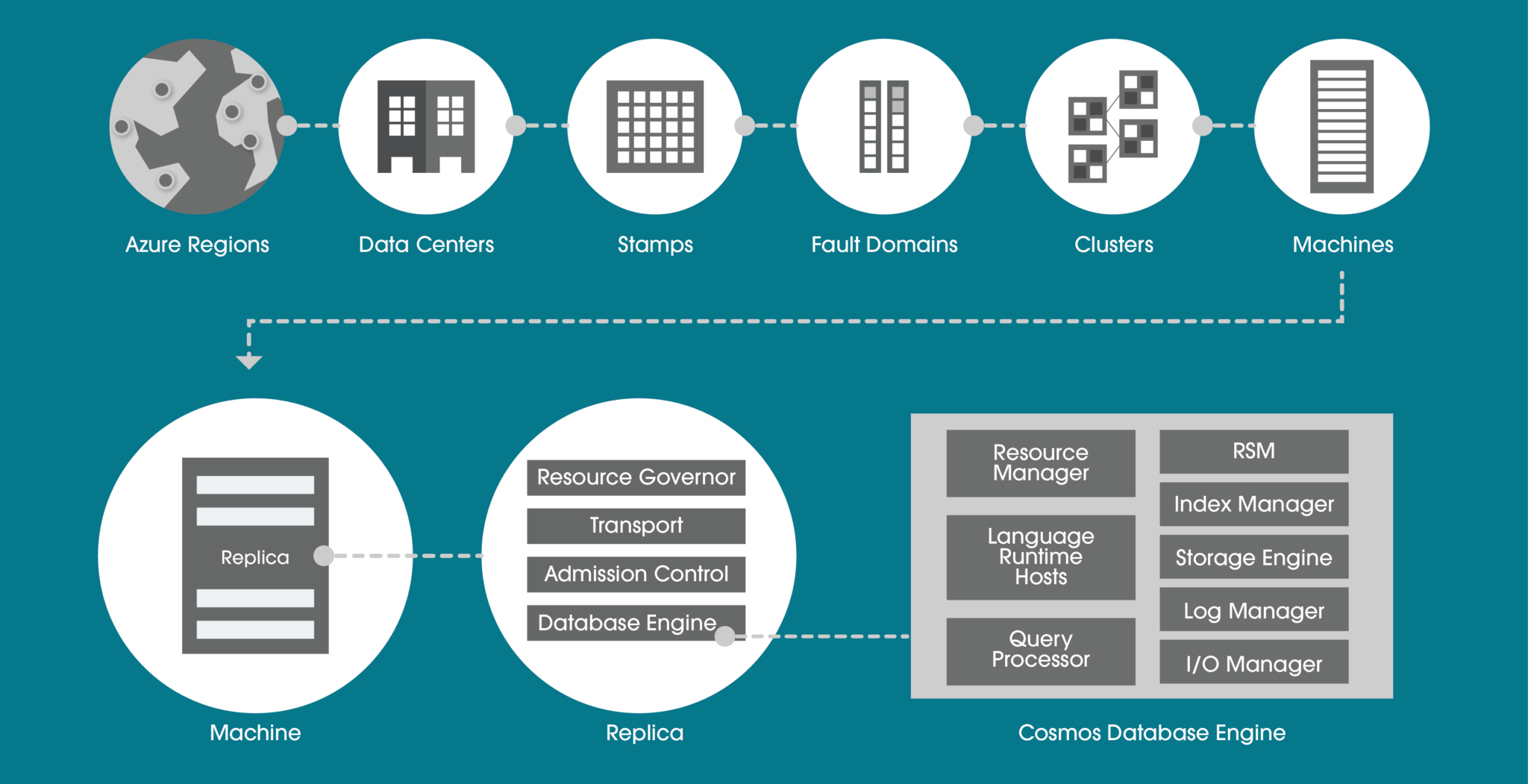

Within a data centre, Azure Cosmos DB is deployed across many clusters, each potentially running multiple generations of hardware. The accompanying diagram shows the system topology used. The key to a successful Azure Cosmos DB implementation is selecting the right partition key. The right choice is determined by the query profile (whether the workload is more read-based or write-based) and the query patterns.
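To make the partition key point concrete, here is a minimal sketch of hash-based distribution. It is an illustration only: the `physical_partition` helper, the SHA-256 hash and the four-partition count are assumptions made for this example and do not describe how Cosmos DB is implemented internally. It shows why a key with many distinct values tends to spread writes evenly, while a key with only a couple of values concentrates them on a few partitions.

```python
import hashlib
from collections import Counter


def physical_partition(partition_key: str, partition_count: int) -> int:
    """Map a partition key value to a partition index by hashing it.

    SHA-256 is used purely for illustration; it is not Cosmos DB's hash.
    """
    digest = hashlib.sha256(partition_key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % partition_count


def distribution(keys, partition_count=4):
    """Count how many items land on each (hypothetical) partition."""
    counts = Counter(physical_partition(k, partition_count) for k in keys)
    return [counts.get(i, 0) for i in range(partition_count)]


# 10,000 writes keyed by a high-cardinality value (hypothetical device IDs).
by_device = distribution(f"device-{i}" for i in range(10_000))

# The same 10,000 writes keyed by a low-cardinality value (two hypothetical regions).
by_region = distribution(("emea" if i % 2 else "apac") for i in range(10_000))

print("by device:", by_device)
print("by region:", by_region)
```

With the device key every partition receives a share of the writes. With the region key at most two partitions do any work, which is the "hot partition" situation a good partition key avoids.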

AZURE COSMOS DB APIS

There are many APIs available to use within Azure Cosmos DB, and each suits a different use case that your business may have.

HIGHLY SECURE

For technology decision-makers, security is a very important consideration. You will be asking yourself, does this technology cover all the layers within a security perimeter? Quite simply, Azure Cosmos DB does. This is possible with many different techniques. Let’s look at the more common features.

PRIVATE ENDPOINT

Private Endpoint is usually a must for enterprises. The ability to ultimately use your own private IP address range to connect to services makes using Azure Cosmos DB feel like an extension to your data centre.

You can then limit access to an Azure Cosmos DB account over private IP addresses. When Private Link is combined with restricted NSG (network security group) policies, it helps reduce the risk of data exfiltration.

ENCRYPTION IN FLIGHT

For encryption in flight, Microsoft uses TLS v1.2 or greater, and this cannot be disabled. Data in transit between Azure data centres is also encrypted. No extra configuration is needed here; it is built into the service.

The same goes for data encryption at rest. Encryption at rest is implemented by using several security technologies, including secure key storage systems, encrypted networks and cryptographic APIs.

MICROSOFT DEFENDER

Microsoft Defender for Azure Cosmos DB provides an extra layer of security intelligence that detects unusual and potentially harmful attempts to access or exploit Cosmos DB accounts.

If your business already uses Azure SQL and Microsoft Defender, it makes sense to continue with the same approach for your NoSQL environments. You will be alerted to SQL injection attacks, anomalous database access patterns and suspicious activity within the database itself.

MULTI-REGION

Do you need “planet” scale applications? If so, this is the technology for you. With this feature, you can replicate the data to all regions associated with your Azure Cosmos DB account. Typically you pick the regions closest to your customer base for that geographical region. Not only does it cater for this global audience but you also get side benefits such as high availability.

This is because if a region does become unavailable, another region will automatically handle any incoming requests, hence the 99.999% SLA with the multi-region approach. If this is not enough, you can even enable every region to be writable, and elastically scale reads and writes all around the world.
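The failover idea can be sketched in a few lines. This is a simplified model rather than the service's actual routing logic: the `pick_region` function, the region names and the `unavailable` set are all hypothetical, and in practice the Cosmos DB service and SDKs handle region selection for you.

```python
def pick_region(preferred_regions, unavailable):
    """Return the first preferred region that is not marked unavailable.

    preferred_regions: ordered list, closest region first (hypothetical names).
    unavailable: set of regions currently considered down.
    """
    for region in preferred_regions:
        if region not in unavailable:
            return region
    raise RuntimeError("no region available")


preferred = ["UK South", "West Europe", "East US"]

# Normal operation: requests go to the closest region.
print(pick_region(preferred, unavailable=set()))

# The closest region is down: requests fall through to the next one.
print(pick_region(preferred, unavailable={"UK South"}))
```

The ordering of the preferred list is the design choice that matters here: it encodes "closest to the customer first", so a failover costs some latency but not availability.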

AZURE COSMOS DB PRICING AND PERFORMANCE

Request Units (RUs) are a metric used by Azure Cosmos DB that abstracts the mix of CPU, IO and memory required to perform an operation on the database. The more RUs you provision, the more resources are available to execute your queries, which naturally means a higher cost.

The number of RUs your database needs can be quite tricky to determine, particularly if you are also using multiple regions. Both factors affect the price.
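As a rough illustration of how a throughput estimate is put together, the sketch below multiplies operation rates by a per-operation RU charge. The only figure taken from Microsoft's documentation is that a point read of a 1 KB item costs 1 RU. The write charge of 5 RUs per KB and the assumption that cost scales linearly with item size are simplifications chosen for this example, and `estimate_rus_per_second` is a hypothetical helper. Indexing, query shape and consistency level all change the real numbers, so treat the result as a starting point, not a sizing.

```python
def estimate_rus_per_second(reads_per_sec, writes_per_sec, item_kb,
                            read_ru_per_kb=1.0, write_ru_per_kb=5.0):
    """Very rough RU/s estimate for point reads and writes.

    read_ru_per_kb: 1 RU for a 1 KB point read (documented baseline).
    write_ru_per_kb: assumed value for illustration only.
    Linear scaling with item size is also an assumption.
    """
    read_cost = reads_per_sec * item_kb * read_ru_per_kb
    write_cost = writes_per_sec * item_kb * write_ru_per_kb
    return read_cost + write_cost


# Hypothetical workload: 100 reads/s and 10 writes/s of 1 KB items.
print(estimate_rus_per_second(reads_per_sec=100, writes_per_sec=10, item_kb=1))
```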

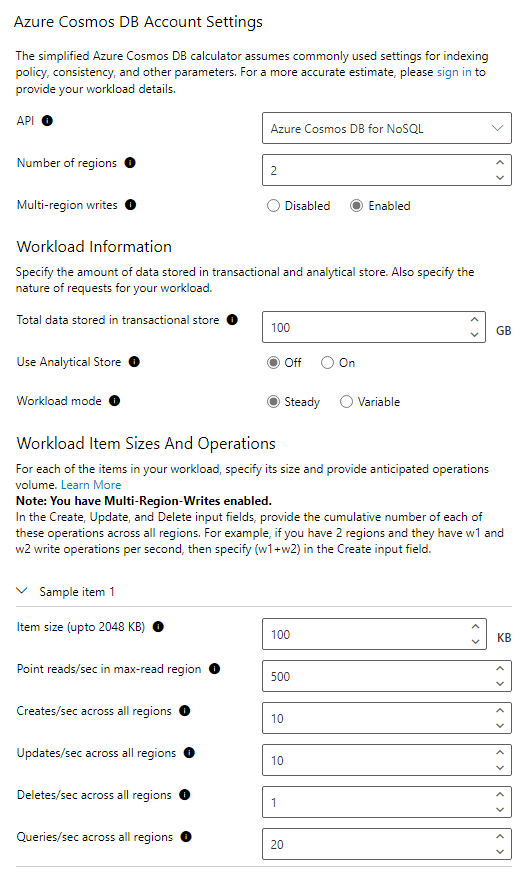

Microsoft has a capacity calculator (https://cosmos.azure.com/capacitycalculator/) to help guide you through this important exercise. Let’s investigate an example. We will need to understand basic requirements such as data sizes, along with more detailed inputs like item size and read rates, which together tell us the RUs needed and thus the total cost.

AZURE COSMOS DB CALCULATOR EXAMPLE

For this example, our workload requirements are:

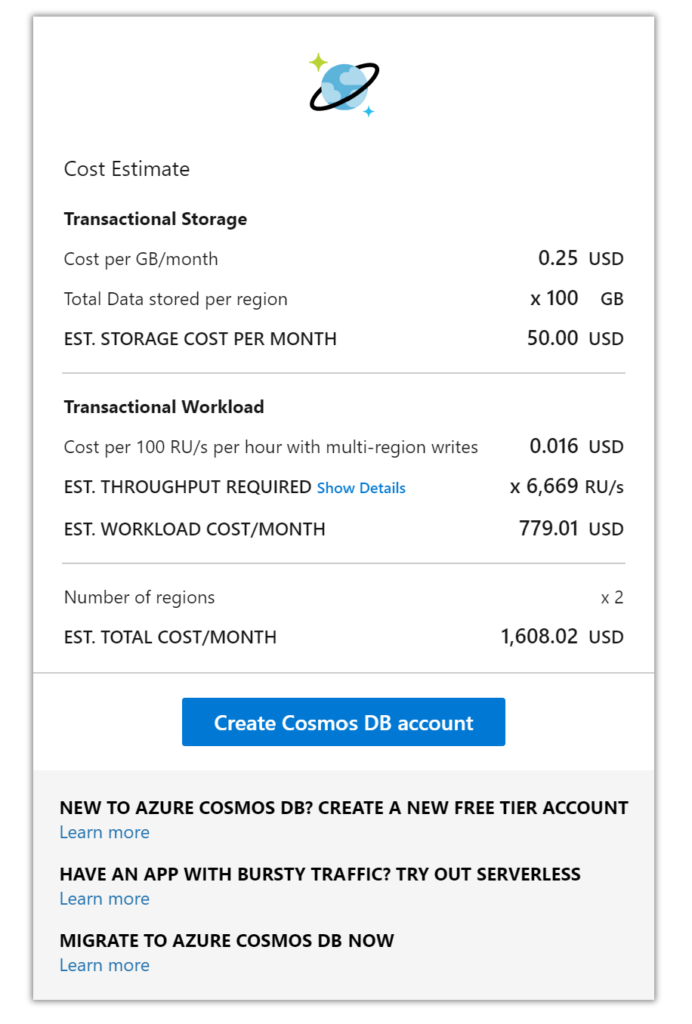

The calculator estimates approximately 6,699 RUs, which costs $779 for the compute. Data storage for 100GB is $50, and adding another region brings the total monthly cost to approximately $1,608 without a reservation policy. With a 3-year reservation you could save up to 60% of the cost at the time of writing.
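The arithmetic behind that total can be reconstructed as follows. This is a sketch under two assumptions that are inferred from the figures rather than stated by the calculator: that the compute charge applies once per region, and that the $50 storage figure already covers both regions. Under those assumptions the numbers add up to the quoted total. The reservation line applies the "up to 60%" saving to the whole bill, which is the most optimistic reading.

```python
COMPUTE_PER_REGION = 779  # USD/month for ~6,699 RUs, from the figures above
STORAGE_TOTAL = 50        # USD/month for 100GB, assumed to cover both regions
REGIONS = 2               # the original region plus one additional region


def monthly_cost(compute_per_region, storage_total, regions):
    """Total monthly cost: compute is charged per region, storage added once."""
    return compute_per_region * regions + storage_total


total = monthly_cost(COMPUTE_PER_REGION, STORAGE_TOTAL, REGIONS)

# Best case with a 3-year reservation, assuming the full 60% applies to everything.
reserved_best_case = round(total * (1 - 0.60), 2)

print(total, reserved_best_case)
```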

This is a very competitive price when you consider not only the performance you are getting from a multi-region write-based database but also the 99.999% SLA, built-in backups and benefits of a cloud-native database. Trying to build something equivalent using just virtual machines would cost your business much more.

AZURE COSMOS DB CHANGE FEED

The change feed in Cosmos DB is a persistent record of changes to a container in the order they occur. It works by listening to a Cosmos DB container for changes, which include insert and update operations made to items within the container. We can then consume that ordered record downstream.
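The behaviour described above can be modelled with a small in-memory sketch. The `Container` class, its `upsert` and `read_change_feed` methods and the integer continuation token are all hypothetical and exist only for this example; this is not the Cosmos DB SDK, and it does not model partitions, leases or how the real service treats intermediate versions of an item.

```python
class Container:
    """Toy container that records every insert/update in an ordered feed."""

    def __init__(self):
        self.items = {}   # current state, keyed by item id
        self._feed = []   # append-only list of (sequence_number, item snapshot)

    def upsert(self, item):
        """Insert or update an item and append the change to the feed."""
        self.items[item["id"]] = item
        self._feed.append((len(self._feed) + 1, dict(item)))

    def read_change_feed(self, continuation=0):
        """Return changes after `continuation`, plus a new continuation token."""
        changes = [snap for seq, snap in self._feed if seq > continuation]
        return changes, len(self._feed)


container = Container()
container.upsert({"id": "1", "status": "created"})

# A downstream consumer reads from the beginning and keeps the token.
first_batch, token = container.read_change_feed()

container.upsert({"id": "1", "status": "approved"})

# On the next poll it only sees what changed since the token.
second_batch, token = container.read_change_feed(token)
print(first_batch, second_batch)
```

Keeping a continuation token on the consumer side is what lets a downstream process resume where it left off instead of re-reading the whole container.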

EVENT SOURCING PATTERN

Why is this useful? With this feature, you can adopt an event sourcing pattern for your design, which is quite common for things like an audit logging system where every state change or action must be captured. The idea of event sourcing is that updates to your application domain should not be applied directly to the domain state. Instead, those updates are stored as events describing the intended changes and written to a store (in this case a Cosmos DB NoSQL container). This neatly describes the function of an audit log.
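A minimal sketch of the pattern, assuming a toy banking domain: state is never updated in place, events are appended to a store, and the current state is derived by replaying them. The `Event` type, the event kinds and the account IDs are invented for illustration, and a plain Python list stands in for the Cosmos DB container.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """An immutable record describing one intended change."""
    account_id: str
    kind: str     # "deposited" or "withdrawn" (hypothetical event kinds)
    amount: int


def apply_event(balance: int, event: Event) -> int:
    """Apply a single event to a balance and return the new balance."""
    if event.kind == "deposited":
        return balance + event.amount
    if event.kind == "withdrawn":
        return balance - event.amount
    raise ValueError(f"unknown event kind: {event.kind}")


def current_balance(events, account_id: str) -> int:
    """Rebuild the state for one account by replaying its events in order."""
    balance = 0
    for event in events:
        if event.account_id == account_id:
            balance = apply_event(balance, event)
    return balance


# The event store is append-only; it doubles as the audit log.
event_store = [
    Event("acc-1", "deposited", 100),
    Event("acc-1", "withdrawn", 30),
    Event("acc-2", "deposited", 50),
]

print(current_balance(event_store, "acc-1"))
```

Because every change is kept, the same list answers both "what is the balance now?" and "how did it get there?", which is exactly what an audit log needs.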

The audit log for software is a critical component today because it ensures security and reliability for the application, especially in regulated markets like finance and insurance. Building this single source of truth where the system is creating an event after every change is very complicated to do with traditional databases like SQL Server or Oracle.

However, with something like the Cosmos DB change feed, we can set up global-scale event sourcing quite easily. When you couple this feature with the right partition key and consider that Cosmos DB has financially backed SLAs covering availability, performance and latency, you can see there is great synergy between Cosmos DB and an event-sourcing approach.

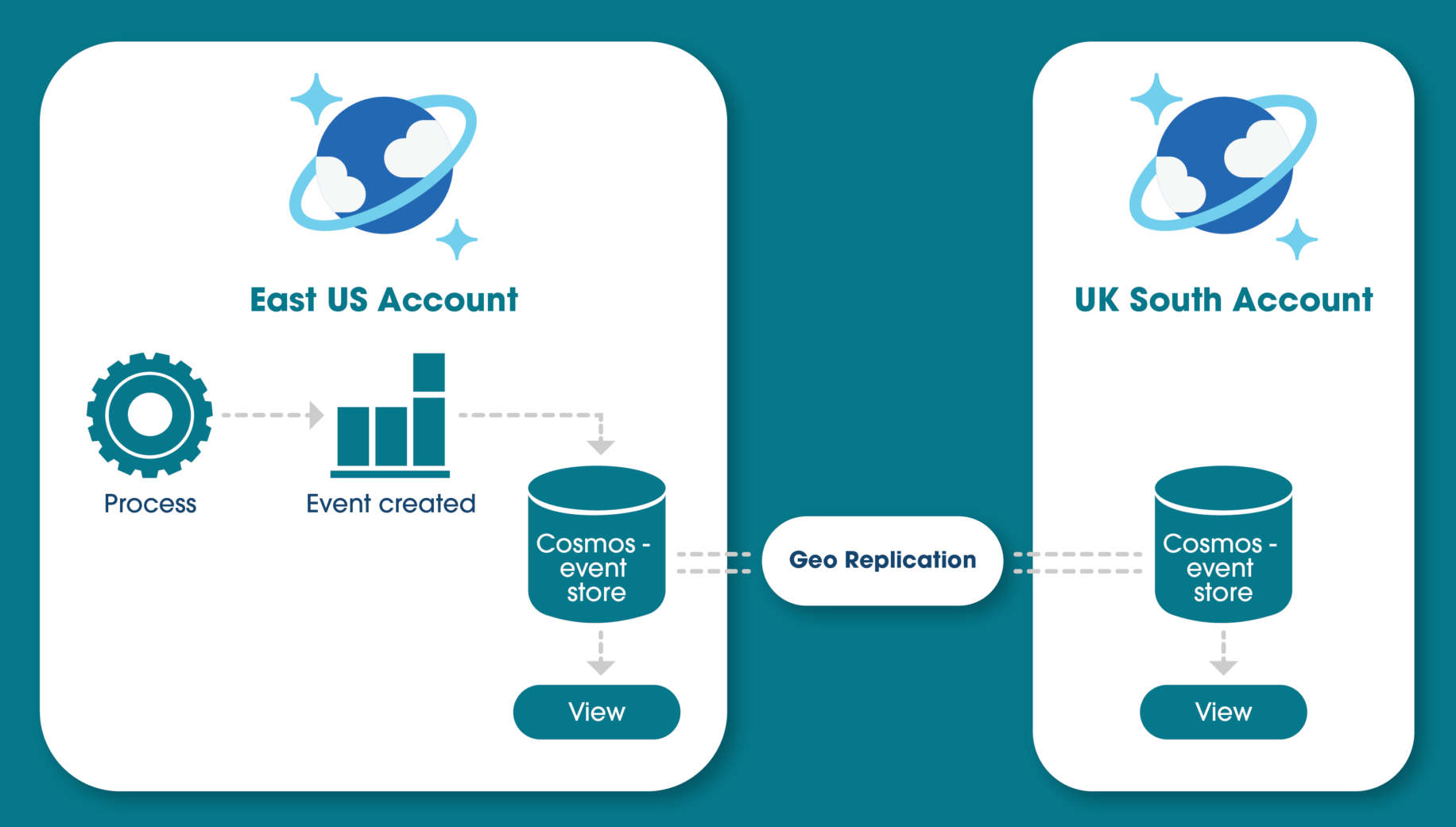

The accompanying diagram gives a high-level view of how this could look if you decide to replicate the “event store” to another region, showing how to reach a global scale.

The idea of building a materialised view on top of the event store is common practice if, for example, you want to query the updates that happened, rather than everything, and make it available to multiple regions.
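As a sketch of that idea, the function below projects an event store into a view that contains only the updates, grouped by entity. The event shape (`entity_id`, `type`, `payload`) and the policy data are hypothetical, and in a real system the view would typically be kept current by a change feed consumer rather than rebuilt from scratch.

```python
from collections import defaultdict


def build_update_view(events):
    """Project the event store into {entity_id: [update payloads in order]}."""
    view = defaultdict(list)
    for event in events:
        if event["type"] == "updated":
            view[event["entity_id"]].append(event["payload"])
    return dict(view)


# Hypothetical event store for an insurance audit log.
events = [
    {"entity_id": "policy-1", "type": "created", "payload": {"premium": 100}},
    {"entity_id": "policy-1", "type": "updated", "payload": {"premium": 120}},
    {"entity_id": "policy-2", "type": "created", "payload": {"premium": 80}},
    {"entity_id": "policy-1", "type": "updated", "payload": {"premium": 130}},
]

view = build_update_view(events)
print(view)
```

The view is cheap to query because the filtering has already been done, while the event store remains the single source of truth.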

CONCLUSION

After reading this article you should now understand how a NoSQL system could fit within your business, along with the core features and benefits that Microsoft’s Azure Cosmos DB can give you: highly available multi-region SLAs, secure application development, the ability to cater for many markets and needs, and the event sourcing approach that it makes possible.

If you are looking for consulting for your Azure Cosmos DB or are looking at using it in your application development, why not get in touch? We are experts in the full Azure development platform.

REFERENCES

The FinOps Foundation defines FinOps as “An evolving cloud financial management discipline and cultural practice that enables organizations to get maximum business value by helping engineering, finance, technology, and business teams to collaborate on data-driven spending decisions.”

FinOps is not a specific technology or single process; it’s a cultural practice whereby everyone involved in cloud usage takes ownership, control, and accountability for cloud spend. Engineers, infrastructure teams, product owners, executives, finance and operations all come together following the FinOps culture in order to improve cost efficiencies, cost visibility and cost predictability going forward, with the overall goal of cloud cost-optimisation.

Cloud adoption and the usage of cloud technologies continue to grow across all organisation sizes and markets. One of the biggest changes a company faces when adopting cloud technologies is switching from a traditional CapEx (Capital Expenditure) spending model to OpEx (Operating Expenditure), meaning cloud spend is tied to day-to-day operational spending instead of larger one-time purchases every few months or years.

The change in cost model introduces its own challenges for organisations, including unpredictable bills, spiralling costs, and cost inefficiencies due to waste. Because of these challenges, it’s important that cloud spend is monitored and controlled correctly, and that’s where FinOps can help.

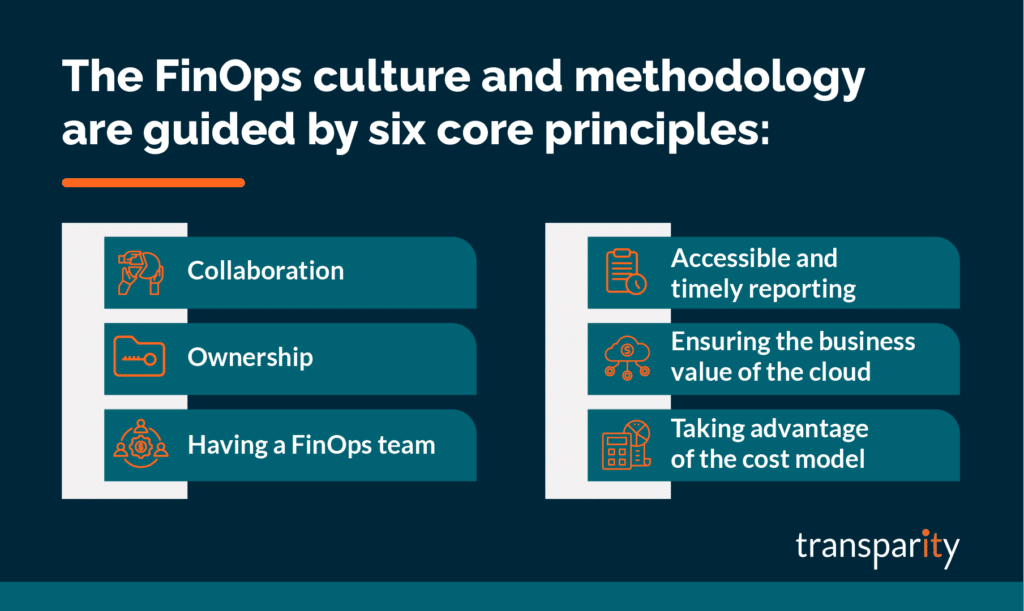

The FinOps culture and methodology are guided by six core principles. All are equally important and should be practised by everyone responsible for cloud spend. Let’s take a look at each one.

Everyone within a business needs to work together and collaborate to monitor, control and improve cost optimisation. FinOps is not just a focus for the finance team.

Everyone must take ownership of their cloud usage. Product teams should budget and own the cost of their specific products. Engineers and architects must think about costs when designing and building workloads. Software developers should see cloud costs as a trackable metric that’s just as important as other metrics such as performance.

Create a centralised FinOps team within your business that can focus on FinOps best practices and processes, and encourage others within the business to focus on their specific FinOps tasks. This is similar to governance or security teams, which own the processes and best practices while everyone within the business remains responsible for their specific tasks.

The FinOps team would usually focus on areas such as process creation, utilisation, discounts, negotiations, customisations and licensing.

It’s imperative to have visibility of cost data that is updated in real-time and accessible by everyone at all levels of the business. This helps to drive efficiency and cost-optimisation whilst empowering team members to make decisions quickly.

Alongside cost data, the visibility of resource changes and cloud service activity logs is also very useful as this can explain why a specific cost has increased and assists with accountability.

FinOps and cloud solution cost-saving exercises should always be balanced against your business decisions, desired outcomes and reasons for adopting cloud technologies in the first place. It would be very easy to cut costs from any cloud bill if you blindly ignored the negative effects it would have on performance, speed, agility or user experience.

Be practical with your decisions and technical designs to ensure a workload first and foremost meets your required outcomes. Then see how you can optimise costs within those parameters.

View the OpEx cost model as an opportunity to add value and increase agility instead of something that is a blocker or added risk.

To implement FinOps within your business, you must think of it as a lifecycle that covers three specific phases of FinOps management: Inform, Optimise and Operate.

The first phase of the FinOps lifecycle is ‘Inform’ and this is all about understanding and reporting on your current costs in order to use the information to inform the rest of the business. The more you know about your current spending and cost control, the more informed you will be going forward to make the right changes and decisions to optimise costs.

The key areas to think about include budgeting, forecasting, allocation and visibility. Microsoft Azure includes a cost management service that will assist with many of these areas, including reporting. However, you must ensure you are tagging resources with key information such as owner, product or cost code. The more information you assign to a cloud service, the easier it will be to report on it and, in turn, inform the rest of the business.
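To illustrate why tagging matters for reporting, here is a small sketch that groups monthly cost by a tag and surfaces anything left untagged. The resource list, the `owner` tag values and the `cost_by_tag` helper are all made up for this example; a real report would come from your cost management tooling rather than a hard-coded list.

```python
from collections import defaultdict


def cost_by_tag(resources, tag):
    """Sum monthly cost per value of `tag`; resources without it go to 'untagged'."""
    totals = defaultdict(float)
    for resource in resources:
        value = resource.get("tags", {}).get(tag, "untagged")
        totals[value] += resource["monthly_cost"]
    return dict(totals)


# Hypothetical resources with their monthly cost in USD.
resources = [
    {"name": "cosmos-audit", "monthly_cost": 779.0, "tags": {"owner": "team-data"}},
    {"name": "cosmos-storage", "monthly_cost": 50.0, "tags": {"owner": "team-data"}},
    {"name": "web-frontend", "monthly_cost": 120.0, "tags": {"owner": "team-web"}},
    {"name": "old-test-vm", "monthly_cost": 45.5, "tags": {}},
]

report = cost_by_tag(resources, "owner")
print(report)
```

The "untagged" bucket is the useful part: it shows how much spend cannot yet be attributed to anyone, which is a natural first metric for the Inform phase.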

The ‘Optimise’ phase is where you start to use the information you’ve previously gathered and take actions that have a tangible effect on your cloud spend. A few key areas of focus include:

The third stage of the lifecycle is ‘Operate’. Operationally you should be looking to continuously improve your FinOps culture and monitor against the goals and processes you have previously defined. Set up meetings between FinOps team members, measure against set KPIs and document improvements that are visible to everyone. Work collectively and improve the culture as you work through the cycles.

As with any introduction of a new culture within a business, don’t expect it to be instantly perfect. In fact, it’s advised to take a “Crawl, Walk, Run” approach when implementing FinOps within an organisation. Take small steps and focus on specific scopes at the beginning to test your processes and approach. As maturity grows, scale out what works for you and improve what isn’t working.

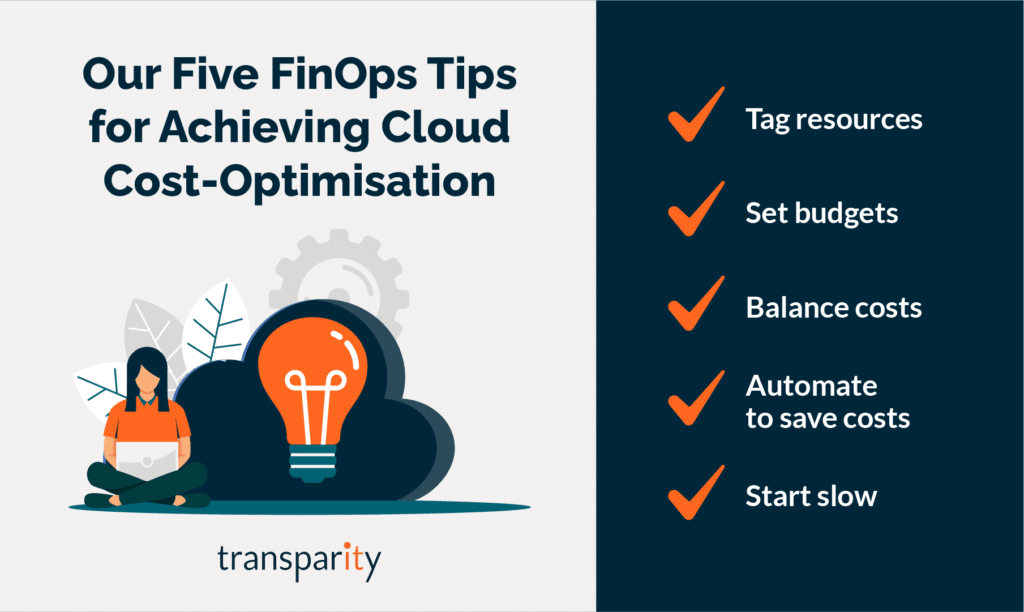

Finally, I will leave you with my top 5 tips for FinOps:

With a team of experts dedicated to infrastructure and the Azure cloud, we have designed a full offering to help any organisation, wherever it is on its journey, understand and implement FinOps. If you are interested in cost-optimisation and better cloud financial management, why not get in touch and find out how we can help?